Estimating Amazon Aurora Costs

Estimating costs for cloud resources can be complicated, and the more features a service has, the harder it is to estimate accurately. Few services have as many facets of pricing as Amazon Aurora. Take a few minutes to learn how to make your money go as far as possible when running an Aurora PostgreSQL or MySQL database.

What Is Amazon Aurora?

Amazon Aurora is a key component of Amazon RDS, the Amazon Web Services (AWS) suite of managed database services. It's designed to simplify the process of setting up and managing databases, reducing the maintenance overhead that can often be a burden.

The service builds on the open-source MySQL and PostgreSQL database engines. AWS adjusts and optimizes these engines to deliver performance gains, fault tolerance, and increased security, to name a few differences.

The service is described as a drop-in replacement for typical MySQL and PostgreSQL databases. This compatibility lets you leverage your existing knowledge and tools while benefiting from Aurora's additional features and improvements.

AWS built Aurora to improve on a relational database architecture that hadn’t changed for decades. Old practices, such as worrying about page flushes and minimizing the impact of failed nodes in a cluster, didn’t have a place in the cloud.

Amazon VP and CTO Werner Vogels discusses some of the approaches AWS took when designing Aurora in his blog post “Amazon Aurora ascendant: How we designed a cloud-native relational database.” From offloading redo log processing to the storage layer to building the database to work across multiple data centers in a region, Aurora is a database built for the cloud.

The Challenge With Understanding Aurora Costs

As we discussed previously, there are a lot of variables to consider for RDS pricing. The obvious factors include instance size, storage size, and deployment type. Less obvious costs, such as support and data transfer, are often left out of the calculation entirely.

Amazon Aurora adds even more complexity with questions such as “Are you using serverless or traditional on-demand instances?” and “Do you want a Standard cluster or an I/O-Optimized cluster with more predictable pricing for I/O-heavy workloads?”

Let's see how the Aurora pricing variables differ from the RDS ones.

How Aurora differs from regular Amazon RDS

Aurora has a few key differences from a normal RDS cluster: the ability to use a serverless deployment, global databases, and more.

The ability to use a serverless variant of the service is surely one of the most interesting differences. Aurora Serverless takes even more of the management burden off your hands: it scales automatically and quickly in response to changes in load while keeping the full suite of improvements that provisioned Aurora offers. This makes Aurora Serverless a great option for unpredictable or bursty workloads.

If you have an application spanning multiple AWS regions, keeping your data in sync and keeping latency low for cross-region database queries is hard. Aurora Global Database answers this need. It replicates data across regions with typical lag under a second and supports up to 16 read replicas in each secondary region. It also lets you choose a different instance type in the secondary regions, so you can run smaller nodes for a warm disaster recovery environment instead of matching your primary database's instance type.

Most database administrators know that a low disk alarm is one of the worst alerts you can get. With Aurora, storage grows automatically as the database needs more space, so you don't have to provision disk up front or watch it fill up. You are also charged only for the storage you actually use, instead of having to carefully manage a provisioned size or autoscaling threshold to control costs. This removes a maintenance task and frees the team to focus on other work.

If you use the MySQL-compatible edition of Aurora, you can also use the Backtrack feature to roll back and recover from errors without restoring from a backup. While there are some limitations, such as not being able to use binary log (binlog) replication, Backtrack is a great way to essentially rewind changes to the database.

How to Estimate Your Aurora Costs

Using the AWS Pricing Calculator is the best way to get an idea of potential costs for an Aurora deployment. You can select the deployment type, I/O configuration, and additional features to get a good idea of what the bill may be at the end of the month. Remember that not all regions charge the same amount for the same resources. This also applies to multi-region deployments such as a Global Database, where each region is billed at its own rates.

Define app architecture

Having an idea of the architecture of the application the database supports is crucial. How many deployments will there be? Will the app run in a single region or many? Is one availability zone (AZ) sufficient, or should instances be spread across several AZs in a region? These are some basic questions to ask as the picture of the system design comes into focus. The answer to each question also affects cost, so factor that in when making the decision.
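Once those questions are answered, the architecture can be turned into a count of billable instance-hours, which you then multiply by each region's hourly instance price. The sketch below assumes a hypothetical `RegionLayout` description, instances that run all month, and the common 730-hours-per-month approximation. Aurora replicates storage across AZs on its own, so extra AZs only add compute cost when you place reader instances in them.

```python
from dataclasses import dataclass

# Common monthly approximation: 365 days * 24 hours / 12 months.
HOURS_PER_MONTH = 730

@dataclass
class RegionLayout:
    """Hypothetical description of one region in the deployment."""
    name: str
    writers: int  # 1 in the primary region, 0 in a Global Database secondary
    readers: int  # reader instances, e.g. one per additional AZ for failover

def monthly_instance_hours(layout: list[RegionLayout]) -> dict[str, int]:
    """Instance-hours per region, assuming every instance runs all month."""
    return {r.name: (r.writers + r.readers) * HOURS_PER_MONTH for r in layout}

layout = [
    RegionLayout("us-east-1", writers=1, readers=2),  # writer plus readers in two other AZs
    RegionLayout("eu-west-1", writers=0, readers=1),  # small warm DR secondary
]
print(monthly_instance_hours(layout))  # {'us-east-1': 2190, 'eu-west-1': 730}
```

Keeping the counts per region matters because, as noted above, the hourly rate differs between regions.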

Calculate data storage and throughput needs

As noted earlier, different regions have different prices, so check the storage and data transfer sections of the pricing page or use the pricing calculator for an estimate. Data transfer costs vary depending on whether the data goes out to the internet or between AWS regions.
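Because transfer is priced per GB and per destination, a rough monthly figure is a weighted sum. The rates in this sketch are placeholders, not real AWS prices, and the hypothetical `monthly_transfer_cost` helper ignores tiered internet pricing and any free allowances.

```python
# Placeholder per-GB rates for illustration only; look up real prices for your regions.
TRANSFER_RATES_PER_GB = {
    "internet_out": 0.09,  # data leaving AWS to the internet
    "inter_region": 0.02,  # data sent to another AWS region
}

def monthly_transfer_cost(gb_by_destination: dict[str, float],
                          rates: dict[str, float] = TRANSFER_RATES_PER_GB) -> float:
    """Sum per-GB transfer charges; raises KeyError for an unknown destination."""
    return sum(gb * rates[dest] for dest, gb in gb_by_destination.items())

print(monthly_transfer_cost({"internet_out": 100, "inter_region": 500}))
```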

On the topic of storage, be sure to know the application's expected I/O rate. Depending on the region, costs range from $0.20 to $0.28 per million requests. For high I/O requirements, consider Aurora I/O-Optimized, which charges more for storage and instances but does not charge for I/O requests at all. Amazon claims up to 40% cost savings, though some companies have seen over 50% savings when switching to I/O-Optimized.
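The Standard versus I/O-Optimized decision comes down to simple arithmetic. This sketch uses the $0.20 per million I/O rate from above; the storage rates and the instance premium are placeholder assumptions to replace with your region's current prices. AWS's published rule of thumb is that I/O-Optimized tends to pay off once I/O exceeds about 25% of total Aurora spend, which `io_share` helps check.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    instance_cost: float  # monthly instance spend under Standard, USD
    storage_gb: float     # average billed storage, GB-month
    io_millions: float    # I/O requests per month, in millions

# Placeholder rates; substitute current prices for your region.
STD_STORAGE_PER_GB = 0.10
STD_IO_PER_MILLION = 0.20
OPT_STORAGE_PER_GB = 0.225
OPT_INSTANCE_PREMIUM = 0.30  # assumed uplift on instance price under I/O-Optimized

def standard_cost(w: Workload) -> float:
    """Instances + storage + per-request I/O charges."""
    return w.instance_cost + w.storage_gb * STD_STORAGE_PER_GB + w.io_millions * STD_IO_PER_MILLION

def io_optimized_cost(w: Workload) -> float:
    """No per-request I/O charge, but higher instance and storage rates."""
    return w.instance_cost * (1 + OPT_INSTANCE_PREMIUM) + w.storage_gb * OPT_STORAGE_PER_GB

def io_share(w: Workload) -> float:
    """Fraction of Standard-configuration spend that goes to I/O requests."""
    return w.io_millions * STD_IO_PER_MILLION / standard_cost(w)

w = Workload(instance_cost=1000, storage_gb=1000, io_millions=5000)
print(standard_cost(w), io_optimized_cost(w), io_share(w))
```

With these placeholder numbers, the I/O-heavy workload costs roughly $2,100 on Standard versus $1,525 on I/O-Optimized, and I/O is nearly half of the Standard bill.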

Determine Aurora usage type and contract type

As with knowing the system’s architecture, having an idea of data needs is also critical. Aurora supports on-demand and reserved instances just as normal RDS does. AWS also offers Aurora Serverless for spiky and unpredictable loads.

Typical on-demand instances for Aurora work much like EC2 and RDS instances: select the instance type based on your requirements. Previously, we wrote about the different types and architectures. Options include memory-optimized instances (including ARM-based Graviton variants) and burstable instances for bursty load requirements. Once a decision is made and the team is confident in it, reserved instances can be purchased to realize more than 50% savings in some cases.
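Whether a reservation actually saves money depends on how much of the term the instance would otherwise run, because a reservation is paid for every hour regardless of use. This sketch of a hypothetical `reserved_savings` helper takes caller-supplied prices; the numbers in the example are placeholders, not real AWS rates.

```python
HOURS_PER_YEAR = 8760  # 365 * 24

def reserved_savings(on_demand_hourly: float, reserved_upfront: float,
                     reserved_hourly: float, term_years: int = 1,
                     utilization: float = 1.0) -> float:
    """Fractional savings of a reserved instance versus on-demand over the term.

    `utilization` is the fraction of hours the on-demand instance would actually
    run; the reservation is billed for every hour of the term regardless.
    A negative result means on-demand would have been cheaper.
    """
    hours = term_years * HOURS_PER_YEAR
    on_demand_total = on_demand_hourly * hours * utilization
    reserved_total = reserved_upfront + reserved_hourly * hours
    return 1 - reserved_total / on_demand_total

# Placeholder prices, not real AWS rates.
print(reserved_savings(0.50, 0.0, 0.30))                   # always-on instance
print(reserved_savings(0.50, 0.0, 0.30, utilization=0.5))  # instance needed half the time
```

The always-on case saves about 40%, while the same reservation for an instance needed only half the time costs about 20% more than on-demand, which is why the team should be confident in the choice first.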

Aurora Serverless is a good option in some cases. There isn’t a concept of instance type with Aurora Serverless. Instead, AWS introduced Aurora Capacity Units (ACUs), which allow granular control of resources for each deployment. Each ACU provides approximately 2 GiB of memory along with corresponding CPU and networking capacity.
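Since capacity is expressed in ACUs rather than instance types, a serverless estimate becomes "ACU-hours times a price per ACU-hour." The sketch below uses the roughly 2 GiB per ACU figure from above; the half-ACU scaling step, the hourly demand profile, and the price are assumptions for illustration. Real billing is finer-grained than one sample per hour.

```python
import math

GIB_PER_ACU = 2.0  # "approximately 2 GiB of memory" per ACU
ACU_STEP = 0.5     # assumed scaling granularity (half-ACU steps)

def acus_for_memory(gib: float) -> float:
    """Smallest multiple of ACU_STEP that provides at least `gib` of memory."""
    return math.ceil(gib / GIB_PER_ACU / ACU_STEP) * ACU_STEP

def monthly_acu_cost(hourly_acus: list[float], min_acu: float, max_acu: float,
                     price_per_acu_hour: float) -> float:
    """Clamp each hour's demand to [min_acu, max_acu] and price the ACU-hours.

    Simplification: one demand sample per hour. Demand above max_acu is capped,
    which in practice means the database is under-provisioned for that hour.
    """
    acu_hours = sum(min(max(a, min_acu), max_acu) for a in hourly_acus)
    return acu_hours * price_per_acu_hour

print(acus_for_memory(5))  # 2.5
# Hypothetical daily profile: quiet nights, a daytime plateau, and a short spike.
day = [0.5] * 10 + [4.0] * 12 + [24.0] * 2
print(monthly_acu_cost(day * 30, min_acu=0.5, max_acu=16, price_per_acu_hour=0.12))
```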

When choosing an Aurora model, keep the application's requirements in mind. Services that require an “always-on” model will benefit from provisioned instances, likely reserved ones, since running serverless all the time can increase costs significantly, as noted in this deep dive into Aurora Serverless.
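One way to make the always-on trade-off concrete is a break-even point: the average ACU level at which Serverless costs the same per hour as a provisioned instance sized for peak load. This is a minimal sketch with hypothetical helper names and placeholder prices; it ignores reservations, which would lower the provisioned side further.

```python
def breakeven_avg_acus(instance_hourly: float, price_per_acu_hour: float) -> float:
    """Average ACUs at which Serverless costs as much as one always-on instance."""
    return instance_hourly / price_per_acu_hour

def cheaper_model(avg_acus: float, instance_hourly: float,
                  price_per_acu_hour: float) -> str:
    """Compare an always-on provisioned instance with Serverless at the same average load."""
    if avg_acus < breakeven_avg_acus(instance_hourly, price_per_acu_hour):
        return "serverless"
    return "provisioned"

# Placeholder prices: an instance sized for peak vs. a per-ACU-hour rate.
print(breakeven_avg_acus(0.48, 0.12))
print(cheaper_model(2.0, 0.48, 0.12), cheaper_model(6.0, 0.48, 0.12))
```

A spiky workload that averages well below the break-even level favors Serverless; a steady workload that sits near or above it favors provisioned instances.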

Calculate additional feature costs

There are a lot of features to consider with Aurora, and most of them have a cost. For example, Global Database is charged per million replicated write I/Os. If you use Aurora MySQL, Backtrack is charged per million change records.
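Both features are priced per million units, so a shared helper covers them. In this sketch the rates are placeholders, and two points are assumptions to verify against the pricing page: that each secondary region receives a copy of every write I/O, and how Backtrack change records are counted (for example, per hour of retention).

```python
def per_million(count: float, rate_per_million: float) -> float:
    """Cost of `count` billable units at a per-million-unit rate."""
    return count / 1_000_000 * rate_per_million

def global_db_replication_cost(primary_write_ios: float, secondary_regions: int,
                               rate_per_million: float) -> float:
    # Assumption: every secondary region receives a copy of each write I/O,
    # so replicated write I/Os grow with the number of secondary regions.
    return per_million(primary_write_ios * secondary_regions, rate_per_million)

def backtrack_cost(change_records: float, rate_per_million: float) -> float:
    # Count change records the way the pricing page defines them before
    # passing them in; this helper only applies the per-million rate.
    return per_million(change_records, rate_per_million)

# Placeholder rates only.
print(global_db_replication_cost(300_000_000, secondary_regions=2, rate_per_million=0.20))
print(backtrack_cost(50_000_000, rate_per_million=0.012))
```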

Snapshots and backups work much as they do in traditional RDS: you pay per GB stored per month, plus data transfer charges when snapshots are copied across regions.
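Backup storage is a per-GB-month charge, but Aurora includes some backup storage at no extra cost. This sketch assumes that free allowance equals 100% of the cluster volume size, which is how AWS has described it; treat the ratio and the placeholder rate as values to confirm on the pricing page.

```python
def backup_storage_cost(total_backup_gb: float, cluster_volume_gb: float,
                        price_per_gb_month: float,
                        free_allowance_ratio: float = 1.0) -> float:
    """Bill only backup storage beyond the free allowance.

    Assumption: the allowance is `free_allowance_ratio` times the cluster volume.
    """
    billable_gb = max(0.0, total_backup_gb - cluster_volume_gb * free_allowance_ratio)
    return billable_gb * price_per_gb_month

# Placeholder rate: 1.5 TB of backups for a 1 TB cluster bills only the extra 500 GB.
print(backup_storage_cost(1500, 1000, price_per_gb_month=0.021))
```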

Finally, consider whether you need professional support from Amazon. This is offered through AWS Support plans, starting at $100 per month for Business Support and $5,500 per month for Enterprise On-Ramp; above those minimums, the fee is a percentage of your monthly AWS spend.
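Because support fees scale with total AWS spend, adding Aurora can raise the support bill too. This sketch computes a tiered percentage fee with a minimum. The tier boundaries and percentages are the ones AWS has historically published for Business Support; treat them as assumptions and check the current AWS Support pricing page before relying on them.

```python
# Assumed Business Support tiers: (upper bound of tier in USD, rate).
# None marks the open-ended top tier.
BUSINESS_TIERS = [(10_000, 0.10), (80_000, 0.07), (250_000, 0.05), (None, 0.03)]
BUSINESS_MINIMUM = 100.0

def tiered_support_fee(monthly_usage: float,
                       tiers=BUSINESS_TIERS,
                       minimum: float = BUSINESS_MINIMUM) -> float:
    """Apply each tier's rate to the slice of usage inside it, then enforce the minimum."""
    fee, lower = 0.0, 0.0
    for upper, rate in tiers:
        top = monthly_usage if upper is None else min(monthly_usage, upper)
        if top <= lower:
            break  # usage does not reach this tier
        fee += (top - lower) * rate
        lower = top
    return max(minimum, fee)

for usage in (500, 20_000, 300_000):
    print(usage, tiered_support_fee(usage))
```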

Validate assumptions in a pre-prod environment

Before committing to a production environment and contractual agreements for support or reserved instances, consider using a pre-production environment to check your estimates. Even if the pre-prod environment runs at a significantly smaller scale, it can help validate the calculations.
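A simple way to compare pre-prod results against the estimate is line item by line item. In this sketch, the hypothetical `find_discrepancies` helper projects pre-prod costs to production size with a single `scale` factor, which assumes costs grow linearly (a rough assumption, since I/O and storage rarely scale perfectly), and flags items outside a tolerance.

```python
def find_discrepancies(estimated: dict[str, float], observed: dict[str, float],
                       tolerance: float = 0.15, scale: float = 1.0) -> dict[str, float]:
    """Flag line items whose projected cost deviates from the estimate.

    `scale` multiplies observed pre-prod costs to project them to production.
    Returns {item: relative_difference}; float("inf") marks a cost that was
    not in the estimate at all.
    """
    flagged: dict[str, float] = {}
    for item in sorted(estimated.keys() | observed.keys()):
        est = estimated.get(item, 0.0)
        projected = observed.get(item, 0.0) * scale
        if est == 0.0:
            if projected > 0.0:
                flagged[item] = float("inf")  # unestimated cost
            continue
        diff = (projected - est) / est
        if abs(diff) > tolerance:
            flagged[item] = diff
    return flagged

# Hypothetical monthly figures: production estimate vs. a pre-prod at 1/10 scale.
print(find_discrepancies({"instances": 1000.0, "io": 200.0},
                         {"instances": 105.0, "io": 40.0, "backup": 3.0},
                         scale=10.0))  # {'backup': inf, 'io': 1.0}
```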

If there is a discrepancy between expected and observed costs, use Cost Explorer to dig into detailed resource usage and find what was missing.
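Cost Explorer can also be queried programmatically through boto3's `get_cost_and_usage`, which helps break Aurora spend down by usage type. In this sketch, the service name used in the filter is an assumption (Aurora usage is reported under RDS; confirm the exact value with `get_dimension_values`), the helper names are hypothetical, and pagination via `NextPageToken` is ignored.

```python
from datetime import date

def rds_cost_request(start: date, end: date) -> dict:
    """Arguments for GetCostAndUsage: RDS cost per month, grouped by usage type.

    The End date is exclusive in Cost Explorer.
    """
    return {
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        # Assumed service name; verify with get_dimension_values.
        "Filter": {"Dimensions": {"Key": "SERVICE",
                                  "Values": ["Amazon Relational Database Service"]}},
        "GroupBy": [{"Type": "DIMENSION", "Key": "USAGE_TYPE"}],
    }

def costs_by_usage_type(response: dict) -> dict[str, float]:
    """Total UnblendedCost per usage type across all returned periods."""
    totals: dict[str, float] = {}
    for period in response["ResultsByTime"]:
        for group in period.get("Groups", []):
            key = group["Keys"][0]
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[key] = totals.get(key, 0.0) + amount
    return totals

def fetch_rds_costs(start: date, end: date) -> dict[str, float]:
    """Call Cost Explorer (needs AWS credentials); ignores NextPageToken pagination."""
    import boto3  # imported here so the helpers above work without the AWS SDK
    ce = boto3.client("ce")
    return costs_by_usage_type(ce.get_cost_and_usage(**rds_cost_request(start, end)))
```

Calling `fetch_rds_costs(date(2024, 1, 1), date(2024, 2, 1))` would return one total per usage type, which you can line up against the items in your estimate.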

Set up billing alerts in production

After deciding on the features, architecture, instance types, and more, keep monitoring costs. In the billing console, you can configure budgets and anomaly alerts at a granular level so you are notified of potentially costly surprises before the end of the month.
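Budgets can also be created as code with boto3's `create_budget` call, which keeps alert thresholds under version control. This sketch builds a monthly cost budget with email alerts on both actual and forecasted spend. The budget name, the 80% threshold, and the `CostFilters` service value are assumptions for illustration; verify the filter against the AWS Budgets documentation.

```python
def aurora_budget_request(account_id: str, monthly_limit_usd: float, email: str,
                          threshold_pct: float = 80.0) -> dict:
    """Arguments for budgets.create_budget with ACTUAL and FORECASTED email alerts."""
    return {
        "AccountId": account_id,
        "Budget": {
            "BudgetName": "aurora-monthly",  # hypothetical name
            "BudgetLimit": {"Amount": f"{monthly_limit_usd:.2f}", "Unit": "USD"},
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
            # Assumed filter: limit the budget to RDS (which includes Aurora).
            "CostFilters": {"Service": ["Amazon Relational Database Service"]},
        },
        "NotificationsWithSubscribers": [
            {
                "Notification": {
                    "NotificationType": notification_type,
                    "ComparisonOperator": "GREATER_THAN",
                    "Threshold": threshold_pct,
                    "ThresholdType": "PERCENTAGE",
                },
                "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
            }
            for notification_type in ("ACTUAL", "FORECASTED")
        ],
    }

def create_aurora_budget(**kwargs) -> None:
    """Create the budget (needs AWS credentials and Budgets permissions)."""
    import boto3  # imported here so the request builder works without the AWS SDK
    boto3.client("budgets").create_budget(**aurora_budget_request(**kwargs))
```

The forecasted alert is the one that catches a surprise early, since it fires when the projected month-end spend crosses the threshold rather than after the money is spent.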

A Simpler Pricing Model for Time-Series Data and Other Demanding Workloads

Cost calculation in AWS is complicated. In fact, there’s been an influx of cloud economist positions to help companies work through the intricacies of managing costs for their cloud deployments. We wrote this piece on reducing Amazon Aurora costs, but there surely must be a better way, right?

You shouldn’t need to be an expert in billing and finance to run a database. That’s why our bill only has two elements, compute and storage, making your Timescale bill transparent and predictable.

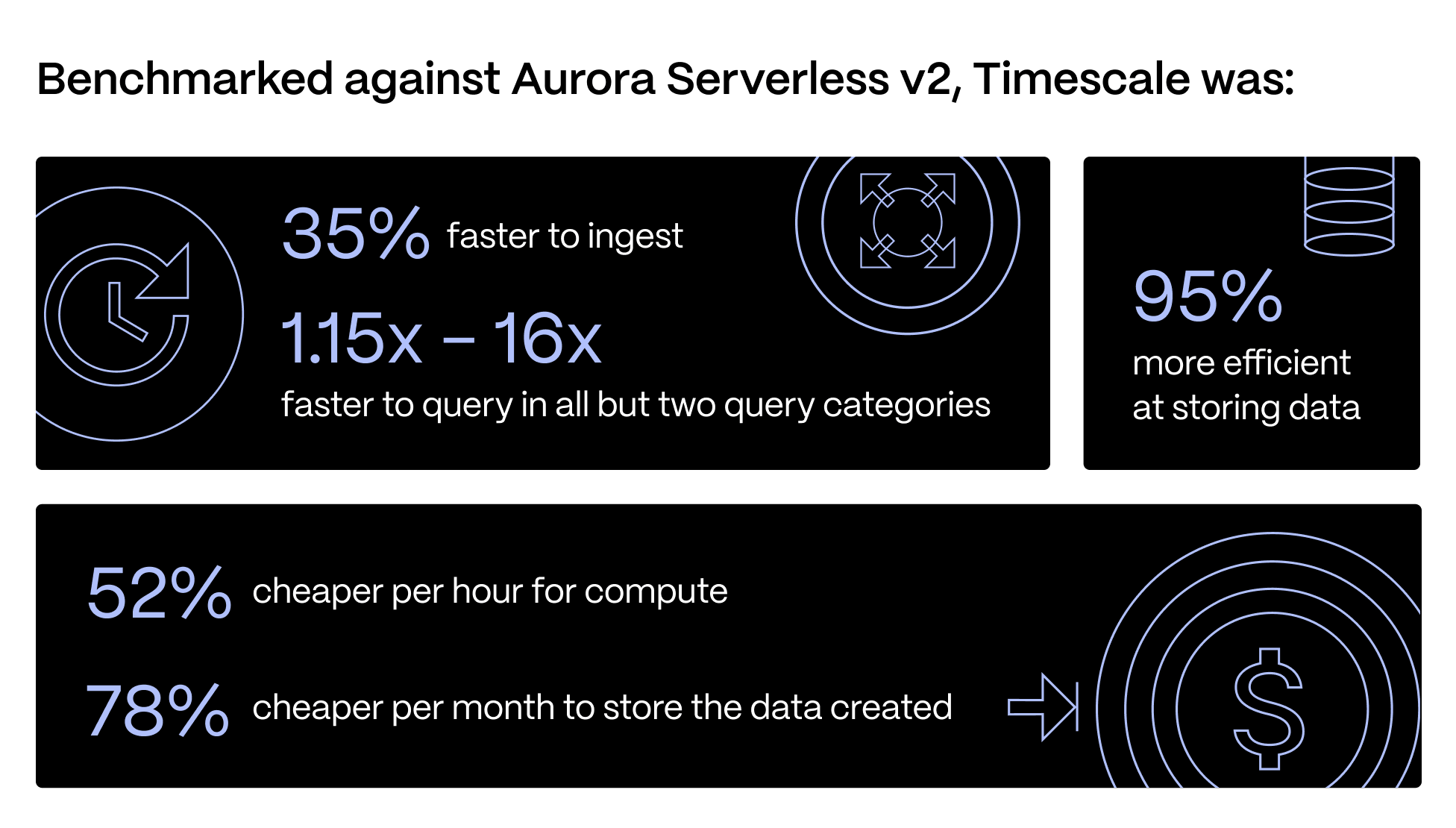

Timescale can be used as a normal PostgreSQL database with the Dynamic PostgreSQL service (so you can save costs by never having to overprovision for peak load again), but we can do even more for you as a database for time series, events, analytics, and other demanding workloads. Timescale offers faster queries, faster ingest, and better storage pricing than RDS and Aurora Serverless.

Complex Aurora billing models shouldn’t stop you from being successful. Sign up for a free Timescale trial today and experience our PostgreSQL platform's simplicity, efficiency, and unparalleled benefits. Estimate how much you would spend to scale your PostgreSQL experience to the next level.