Timescale Parameters You Should Know About (and Tune) to Maximize Your Performance

One of the best parts of being a support engineer at Timescale is working with our customers to get a deep understanding of their use case, making them successful on our cloud service, Timescale. Along the way, we’ve picked up some knowledge on a few key parameters that you should be aware of and tune based on your use case to maximize your performance. In this blog post, we’ll present them to you, sharing our advice on what to look for.

Hopefully, this advice will help you make the most of Timescale. Even if you are not a Timescale customer yet, this information may also be useful for you to apply in your own environment—and if you’re curious, you can also try Timescale completely for free. You won’t need a credit card to start the trial, and you'll have access to support the entire time.

One caveat to the information below: the optimal setting is typically found via some trial and error. There are too many variables in each user's environment and database configuration for us to be able to write a blog that gives you the optimal settings for all use cases. Yet, we aim to provide some general guidelines that you may find useful.

What in The World Are Background Workers?

Let’s get started going through our list of key Timescale parameters covering a frequently asked one: background workers.

Do you ever see warnings in your log such as...

"WARNING: failed to launch job [n] "Telemetry Reporter [n]": out of background workers"

or,

"Compression Policy [n]": failed to start a background worker?

If the answer is yes, you'll find this information useful.

Background workers perform background processing for operations specific to TimescaleDB (both live queries and background jobs, all kinds of User-Defined Actions/Policies).

The background worker's settings need to be tuned to get the most out of TimescaleDB—issues often arise when worker settings are not properly set. Some of the issues we see often caused by a misconfiguration of background workers are:

- User-Defined Actions are not working properly.

- Continuous aggregates are not working properly.

- Compression policies are not working properly.

- The retention policies are not working properly.

- Database size rapidly increases, due to failures in compression and the data retention policies.

To avoid these issues, these are three key settings you should look at and our general rule of thumb on how to configure them.

timescaledb.max_background_workers

You should configure the timescaledb.max_background_workers setting to be equal to the sum of your total number of databases + the total number of concurrent background workers you want running at any given point in time.

Note that there is a limit of 1000 timescaledb.max_background_workers on Timescale. If you have significantly more than 1000 jobs running simultaneously, we advise you to distribute the execution of your jobs.

max_parallel_workers

By default, the max_parallel_workers setting corresponds to the number of CPUs available.

For larger queries, PostgreSQL automatically uses parallel workers if they are available. Increasing max_parallel_workers can improve query performance for large queries that trigger the use of parallel workers.

max_worker_processes

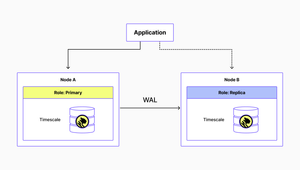

max_worker_processes defines the total pool of workers available to both background and parallel workers, as well as a small number of built-in PostgreSQL workers.

max_worker_processes should be AT LEAST 3 (required for checkpointer, WAL writer, and vacuum processes) plus the sum of the background workers and parallel workers:

max_worker_processes= 3 +timescaledb.max_background_workers+max_parallel_workers.

To learn more about timescaledb.max_background_workers, max_parallel_workers, and max_worker_processes, check out our docs.

What to do About Memory Allocation?

Now that we've covered workers, let's move on to the next topic: memory allocation.

Do You Have Heavy Queries That Aren’t Performing as Expected?

Or perhaps you are hitting out-of-memory (OOM) events frequently? If the answer is yes, it may be time to review your work_mem setting.

work_mem defines the amount of memory to be used by a query operation (such as a sort or hash table) before writing to temporary disk files. Sort operations are used by ORDER BY, DISTINCT, and merge joins.

The default value for this setting is four megabytes (4MB). If several operations (sort or hash) are running concurrently, then each operation will be allowed to use up to work_mem of memory. So, the total memory used could be many times the value of work_mem—this situation should be considered when specifying this setting.

If work_mem is properly tuned, major sort operations are much faster than writing and reading to disk files. If you set work_mem higher than recommended, it can badly affect the overall available system memory, which can lead to out of memory (OOM) events.

To avoid these issues, we recommend setting work_mem as:

work_mem= (25% of RAM) / max_connections.

However, work_mem is assigned whenever a query requests a sort, hash (or any other structure that requires space allocation), which can occur multiple times per query. It might be safer to assume that max connections * 2 is the amount of memory (RAM) that will be used in practice while devising the recommended work_mem. But please, consider that this is dependent on your workload and query patterns.

Are Maintenance Operations Taking a Long Time to Execute?

maintenance_work_mem is another good parameter to look at. It defines the amount of memory to be used by maintenance operations. Maintenance operations include VACUUM, CREATE INDEX, ALTER TABLE ADD FOREIGN KEY operations.

Setting maintenance_work_mem to less than recommended may lead to a decreased performance of vacuuming and database dump/restore operations. For example, restoring the database using pg_restore will usually involve creating some indexes, so it might be worthwhile to increase maintenance_work_mem while working with pg_restore.

The default value for maintenance_work_mem is 64MB. Our recommendation is to set it up to a higher value than work_mem in order to improve performance for VACUUM operations and database dump/restore. A good guideline:

maintenance_work_mem= 0.05 * total RAM.

While configuring maintanace_work_mem, you should note that memory for shared_buffers and work_mem is already allocated, so you should have enough memory to accommodate: concurrent maintenance operations during peak time * maintenance_work_mem.

maintenance_work_mem follows the same guidelines as the work_mem setting, i.e the configuration is per operation, and the necessary memory is allocated on the run. If you are too aggressive with the settings, you may discover out-of-memory errors. It's better to start small, and only increase it once you've done enough monitoring to determine the system's peak memory usage.

Are There Memory Considerations if my Workload is Primarily READ Operations vs Primarily WRITE Operations?

Another question we hear often.

shared_buffer defines the amount of memory the database server uses for shared memory buffers. The default shared_buffer is typically 128 megabytes (128MB), which can be quite low for any typical system.

A reasonable amount to start with is 25% of RAM:

shared_buffer= 25% of RAM.

Consider that PostgreSQL requires free memory for system buffers, sort, and maintenance operations, so it is not advisable to set shared_buffers it to a majority of RAM. Nonetheless, shared_buffer can be set to higher than recommended if your use case is mostly READ operations. On the contrary, setting shared_buffer higher than recommended will decrease performance if your use case is primarily WRITE operations, as all the contents from shared_buffer must be flushed during write operations.

✨ Editor's note ✨ If you want to big deeper into shared buffers in PostgreSQL, check out our blog post series on the topic.

“Out of Shared Memory” Errors?

shared_buffer is the biggest chunk of shared memory that PostgreSQL uses, but there might be other components that come into play. One of those components is the Lock Space that’s used to store different types of locks (shared across user processes) by the database.

There might be a few reasons you might run out of shared memory, but if your error message in the PostgreSQL logs follows a hint around increasing max_locks_per_transaction, which is a parameter that controls the average number of object locks allocated for each transaction, this section might interest you.

- If the error message that appears in the logs looks like this,

ERROR: out of shared memory, you might need to increasemax_locks_per_transaction.

The above error can cause queries to fail, as the database can’t grant any more locks. A good check, once you know you’re hitting this, is to see if you’re opening an unbounded number of transactions and never closing them, or maybe they are long-running and touching a lot more objects (eg. tables, indexes, etc). But, if your application really needs a lot more locks, you can tune the parameter max_locks_per_transaction. The default value stands at 64—you might need to raise this value.

The recommendation is to choose a number equal to double the maximum number of chunks you can reasonably anticipate having in a hypertable while allowing for some growth. This way we can account for the fact that a hypertable query will typically use as many locks as there are chunks in the hypertable, or twice as many if an index is used. The chunks_detailed_size command allows you to view the number of chunks you currently have.

A Practical Example: Using This Knowledge To Diagnose And Fix Performance Problems

To finish up, we’d like to take a recent example of an issue reported to us (related to the parameters we talked about in this post) and explain how we approached the problem. You can apply the same process to try to fix the performance issues you may be seeing in your own use case.

Problem Description

Disk usage is increasing rapidly. Unsure what’s causing it.

Analysis

Checking the logs, we found frequent mentions of "Compression Policy [N]": out of background workers.

We determined that the jobs related to compression (and also retention policy) were failing, leading to an unexpected increase in disk usage.

Relevant Settings

To review the current background worker settings, we used the following queries:

show timescaledb.max_background_workers;show max_worker_processes;show max_parallel_workers;

To confirm the current job execution metrics, we used the following queries:

select count(*) from timescaledb_information.jobs;select * from timescaledb_information.jobs;select * from timescaledb_information.job_stats;

Finally, we wanted to understand the current job distribution pattern, so we used the following query to get a breakdown of each jobs next_start:

select date_trunc('minute', next_start), count(*) from timescaledb_information.jobs group by 1 order by 1,2 desc;

Assessment

On review of the relevant settings, we found that there were over two thousand user-defined jobs being executed on the database, but timescaledb.max_background_workers was set to 8.

We also found that the job distribution was extremely narrow, in that a thousand jobs were set to execute within a 5-minute window when other times of the day had little to no activity scheduled.

Recommendation

Our first recommendation was to distribute the workload more evenly throughout the day. This can be done by using the next_start option:

SELECT alter_job(<job_id>, next_start => 'YYYY-MM-DD HH:MI:00.0+00');

This allows us to set a reasonable number of background workers to begin with. If jobs continue to fail, then we can increase up to a maximum of 1000 background workers. We decided to start with 100 background workers, and 16 parallel workers, which gave us the following settings:

timescaledb.max_background_workers = 100max_worker_processes = 116max_parallel_workers = 16

Conclusion

If you’re seeing some unexpected behavior in your Timescale databases, review the parameters listed in this post, and apply the advice we've shared with you. Hopefully, you’ll find it useful!

If you still have doubts and would like to get in touch with our support team, it will be our pleasure to work through some of your questions together. If you are a current user of Timescale, you have direct access to support at no extra cost—just shoot us a message at [email protected]. If you’re not yet a Timescale user, you can use it for free for 30 days, no credit card required—and you have full access to our support team during your trial.

We look forward to working with you!